Control Award Updates

Tags: control

Personhours: 3

Task:

In the past few months we've made a lot of improvements and updates to our robot and code. For example, we changed our gripper system again; it now includes an internal lift, which makes it easier to deposit our collected glyphs into the cryptobox. So we have decided to update our control award submission to reflect these changes.

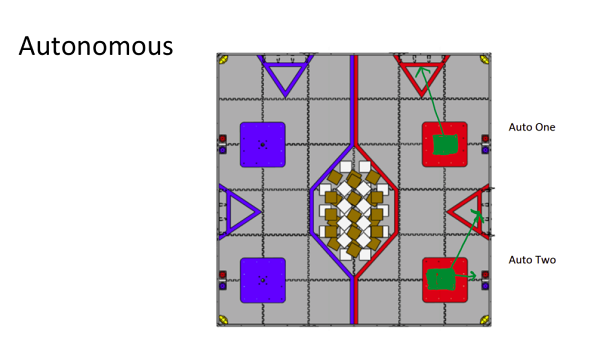

Autonomous Objective:

- Knock off opponent's Jewel, place glyphs in correct location based on image, park in safe zone (85 pts)

- Park in Zone, place glyph in cryptobox (25 pts)

Autonomous B can be delayed by a set amount of time, allowing for better coordination with alliance mates. If our partner team is more reliable, we can give them freedom to move first, but still add points to our team score.
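
The delayed start described above can be sketched as a small timer that is polled once per loop instead of sleeping, which keeps it compatible with non-blocking code. This is only an illustration, not our actual implementation: the class name `StartDelay`, the method `isDone`, and the idea of passing in the current time in milliseconds are all assumptions made for the example.

```java
/** Hypothetical helper: a start delay that is polled, so it never blocks the main loop. */
final class StartDelay {
    private final long delayMillis;
    private boolean started = false;
    private long startMillis;

    StartDelay(double delaySeconds) {
        this.delayMillis = Math.round(delaySeconds * 1000.0);
    }

    /**
     * Call once per loop with the current time in milliseconds.
     * The first call starts the timer; returns true once the delay has elapsed.
     */
    boolean isDone(long nowMillis) {
        if (!started) {
            started = true;
            startMillis = nowMillis;
        }
        return nowMillis - startMillis >= delayMillis;
    }
}
```

In a loop-style autonomous, the routine would simply return early from each loop pass until `isDone(System.currentTimeMillis())` reports true, leaving the alliance partner free to move first.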

Sensors Used:

- Phone Camera - Allows the robot to determine where to place glyphs using Vuforia, taking advantage of the wide range of data provided by its pattern detection, and using the Open Source Computer Vision Library (OpenCV) to analyze the pattern in the image.

- Color Sensor - Robot selects correct jewel using the passive mode of the sensor. Feedback determines whether the robot needs to move forwards or backwards to knock off opposing team's jewel.

- Inertial Measurement Unit (IMU) - 3 gyroscopes and accelerometers return the robot's heading for station keeping and straight-line driving in autonomous, and let us orient to specific headings for proper navigation, cryptobox placement, and balancing.

- Motor Encoders - Motor odometry tracks how many rotations the wheels have made and converts them into meters travelled. In combination with feedback from the IMU, we can calculate our location on the field relative to the starting point.
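
As a rough illustration of how encoder counts and IMU heading can be combined into a field position, here is a minimal dead-reckoning sketch. The class name `DeadReckoning`, the ticks-per-revolution and wheel-diameter parameters, and the convention that a heading of 0 degrees points along +Y with clockwise-positive angles are all assumptions for the example, not values taken from our robot.

```java
/** Hypothetical dead-reckoning tracker: encoder distance plus IMU heading gives x/y in meters. */
final class DeadReckoning {
    private final double metersPerTick;
    private int lastTicks = 0;
    private double x = 0.0; // meters to the right of the starting point (assumed convention)
    private double y = 0.0; // meters forward of the starting point (assumed convention)

    DeadReckoning(int ticksPerRev, double wheelDiameterMeters) {
        // One wheel revolution covers one circumference.
        this.metersPerTick = Math.PI * wheelDiameterMeters / ticksPerRev;
    }

    /** Call once per loop with the latest encoder count and IMU heading. */
    void update(int encoderTicks, double headingDegrees) {
        double distance = (encoderTicks - lastTicks) * metersPerTick;
        lastTicks = encoderTicks;
        double theta = Math.toRadians(headingDegrees);
        // Heading 0 = +Y, clockwise positive, so sin maps to x and cos to y.
        x += distance * Math.sin(theta);
        y += distance * Math.cos(theta);
    }

    double getX() { return x; }
    double getY() { return y; }
}
```

Each update treats the distance travelled since the last loop as a short straight segment along the current heading, so the estimate is only as good as the loop rate and wheel traction allow.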

Key Algorithms:

- Integrate motor odometry, IMU gyroscope, and accelerometer with trigonometry so robot knows its location at all times.

- Use Proportional/Integral/Derivative (PID) control combined with IMU readouts to maintain heading, correcting any difference between actual and desired heading at a power level appropriate for the size of the difference and the amount of error built up. This allows us to navigate the field accurately during autonomous.

- Vuforia tracks and maintains distance from patterns on the wall using the robot controller phone's camera, combining 2 machine vision libraries, trigonometry, and PID motion control.

- All code is non-blocking, allowing multiple operations to happen at the same time. Extensively use state machines to prevent conflicts over priorities in low-level behaviors.
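
The PID heading correction in the list above can be sketched roughly as follows. This is a generic textbook PID loop under stated assumptions, not our tuned controller: the class name `HeadingPid`, the gains, the degree-based angles, and the clamp of the output to the range -1 to 1 are all hypothetical.

```java
/** Hypothetical PID controller that turns a heading error into a turn power in [-1, 1]. */
final class HeadingPid {
    private final double kp, ki, kd;
    private double integral = 0.0;
    private double lastError = 0.0;
    private boolean hasLast = false;

    HeadingPid(double kp, double ki, double kd) {
        this.kp = kp;
        this.ki = ki;
        this.kd = kd;
    }

    /** Wraps an angle difference into [-180, 180) so the robot turns the short way around. */
    static double wrapDegrees(double angle) {
        double a = angle % 360.0;
        if (a >= 180.0) a -= 360.0;
        if (a < -180.0) a += 360.0;
        return a;
    }

    /** Call once per loop; dtSeconds must be greater than zero. */
    double update(double targetDegrees, double actualDegrees, double dtSeconds) {
        double error = wrapDegrees(targetDegrees - actualDegrees);
        integral += error * dtSeconds;                       // error built up over time
        double derivative = hasLast ? (error - lastError) / dtSeconds : 0.0;
        lastError = error;
        hasLast = true;
        double output = kp * error + ki * integral + kd * derivative;
        return Math.max(-1.0, Math.min(1.0, output));        // clamp to motor power range
    }
}
```

Because `update` only does arithmetic and returns immediately, it can be called from inside a state machine on every loop pass without blocking other behaviors.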

Driver Controlled Enhancements:

- Internal Lift System is a conveyor-belt-like system that moves blocks from the bottom of the grippers to the top, making it easier for the drivers to deposit the glyphs in the cryptobox.

- If the lift has been raised, jewel arm movement is blocked to avoid a collision.

- The robot's slow mode allows our drivers to accurately maneuver around the field as well as gather glyphs easily and accurately.

- The robot also has a turbo mode. This speed is activated when the bumper is pressed, allowing the driver to quickly navigate the field.
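
The speed modes and the jewel arm interlock above could look something like the sketch below. The class name `DriverAssist`, the three scale factors, and the rule that turbo wins when both modes are requested are assumptions chosen for illustration; the real values and priorities on our robot may differ.

```java
/** Hypothetical driver-assist helpers: speed-mode scaling and a lift/jewel-arm interlock. */
final class DriverAssist {
    // Assumed scale factors, not measured or tuned values.
    static final double SLOW_SCALE = 0.4;
    static final double NORMAL_SCALE = 0.7;
    static final double TURBO_SCALE = 1.0;

    /** Scales a joystick value in [-1, 1] according to the active speed mode. */
    static double scaleDrive(double stick, boolean slowMode, boolean turboBumper) {
        double scale = NORMAL_SCALE;
        if (turboBumper) {
            scale = TURBO_SCALE;   // assumption: turbo overrides slow mode while held
        } else if (slowMode) {
            scale = SLOW_SCALE;
        }
        return stick * scale;
    }

    /** The jewel arm may only move while the lift is down, to avoid a collision. */
    static boolean jewelArmAllowed(boolean liftRaised) {
        return !liftRaised;
    }
}
```

Keeping both checks as pure functions makes them easy to test off the robot before wiring them to gamepad input.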

Date | February 6, 2018